Spring Batch is a lightweight, comprehensive batch framework designed to enable the development of robust batch applications vital for the daily operations of enterprise systems. Spring Batch builds upon the characteristics of the Spring Framework that people have come to expect (productivity, POJO-based development approach, and general ease of use), while making it easy for developers to access and leverage more advanced enterprise services when necessary. Spring Batch is not a scheduling framework. There are many good enterprise schedulers (such as Quartz, Tivoli, Control-M, etc.) available in both the commercial and open source spaces. Spring Batch is intended to work in conjunction with a scheduler, not to replace one.

Spring Batch provides reusable functions that are essential in processing large volumes of records, including logging/tracing, transaction management, job processing statistics, job restart, skip, and resource management. It also provides more advanced technical services and features that enable extremely high-volume and high-performance batch jobs through optimization and partitioning techniques. Spring Batch can be used in both simple use cases (such as reading a file into a database or running a stored procedure) and complex, high-volume use cases (such as moving large amounts of data between databases, transforming it, and so on). High-volume batch jobs can leverage the framework in a highly scalable manner to process significant volumes of information.

Usage Scenarios

A typical batch program generally:

- Reads a large number of records from a database, file, or queue.

- Processes the data in some fashion.

- Writes the data back in a modified form, typically as a large number of records in a database, file, or queue.

Spring Batch automates this basic batch iteration, providing the capability to process similar transactions as a set, typically in an offline environment without any user interaction. Batch jobs are part of most IT projects, and Spring Batch is the only open source framework that provides a robust, enterprise-scale solution.
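The read, process, write cycle described above can be sketched in plain Java. This is only an illustration of the iteration pattern, not Spring Batch code: the `Reader`, `Processor`, `Writer`, and `ChunkLoop` names are hypothetical stand-ins, and the chunk size is an arbitrary parameter.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-ins for the three roles; these are not Spring Batch types.
interface Reader<T> { T read(); }                 // returns null when input is exhausted
interface Processor<I, O> { O process(I item); }  // returns null to filter an item out
interface Writer<T> { void write(List<T> chunk); }

final class ChunkLoop {
    // Reads items one at a time, processes each, and writes the results in chunks.
    // Returns the total number of items handed to the writer.
    static <I, O> int run(Reader<I> reader, Processor<I, O> processor,
                          Writer<O> writer, int chunkSize) {
        int written = 0;
        List<O> chunk = new ArrayList<>();
        I item;
        while ((item = reader.read()) != null) {
            O result = processor.process(item);
            if (result != null) {
                chunk.add(result);
            }
            if (chunk.size() == chunkSize) {
                writer.write(new ArrayList<>(chunk));
                written += chunk.size();
                chunk.clear();
            }
        }
        if (!chunk.isEmpty()) {
            // Flush the final, partial chunk.
            writer.write(new ArrayList<>(chunk));
            written += chunk.size();
        }
        return written;
    }
}
```

A real framework wraps each chunk in a transaction and records progress so that a failed run can be restarted; the sketch leaves both out.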

Many applications within the enterprise domain require bulk processing to perform business operations in mission critical environments. These business operations include:

- Automated, complex processing of large volumes of information that is most efficiently processed without user interaction. These operations typically include time-based events (such as month-end calculations, notices, or correspondence).

- Periodic application of complex business rules processed repetitively across very large data sets (for example, insurance benefit determination or rate adjustments).

- Integration of information that is received from internal and external systems that typically requires formatting, validation, and processing in a transactional manner into the system of record.

Batch processing is used to process billions of transactions every day for enterprises.

Technical Objectives

- Batch developers use the Spring programming model: Concentrate on business logic and let the framework take care of infrastructure.

- Clear separation of concerns between the infrastructure, the batch execution environment, and the batch application.

- Provide common, core execution services as interfaces that all projects can implement.

- Provide simple and default implementations of the core execution interfaces that can be used 'out of the box'.

- Easy to configure, customize, and extend services, by leveraging the Spring Framework in all layers.

- All existing core services should be easy to replace or extend, without any impact to the infrastructure layer.

- Provide a simple deployment model, with the architecture JARs completely separate from the application, built using Maven.

Spring Batch Architecture

The figure below shows the layered architecture that supports the extensibility and ease of use for end-user developers.

This layered architecture highlights three major high-level components: Application, Core, and Infrastructure. The application contains all batch jobs and custom code written by developers using Spring Batch. The Batch Core contains the core runtime classes necessary to launch and control a batch job. It includes implementations for JobLauncher, Job, and Step. Both Application and Core are built on top of a common infrastructure.

The Domain Language of Batch

To any experienced batch architect, the overall concepts of batch processing used in Spring Batch should be familiar and comfortable. There are "Jobs" and "Steps" and developer-supplied processing units called ItemReader and ItemWriter.

The following diagram is a simplified version of the batch reference architecture that has been used for decades. It provides an overview of the components that make up the domain language of batch processing. This architecture framework is a blueprint that has been proven through decades of implementations on the last several generations of platforms (COBOL/Mainframe, C/Unix, and now Java/anywhere). JCL and COBOL developers are likely to be as comfortable with the concepts as C, C#, and Java developers. Spring Batch provides a physical implementation of the layers, components, and technical services commonly found in the robust, maintainable systems that are used to address the creation of simple to complex batch applications, with the infrastructure and extensions to address very complex processing needs.

The preceding diagram highlights the key concepts that make up the domain language of Spring Batch. A Job has one to many steps, each of which has exactly one ItemReader, one ItemProcessor, and one ItemWriter. A job needs to be launched (with JobLauncher), and metadata about the currently running process needs to be stored (in JobRepository).

Job

A Job is an entity that encapsulates an entire batch process. As is common with other Spring projects, a Job is wired together with either an XML configuration file or Java-based configuration. This configuration may be referred to as the "job configuration". However, Job is just the top of an overall hierarchy, as shown in the following diagram:

In Spring Batch, a Job is simply a container for Step instances. It combines multiple steps that belong logically together in a flow and allows for configuration of properties global to all steps, such as restartability. The job configuration contains:

- The simple name of the job.

- Definition and ordering of Step instances.

- Whether or not the job is restartable.

For example:

    @Bean
    public Job footballJob() {
        return this.jobBuilderFactory.get("footballJob")
                .start(playerLoad())          // step
                .next(gameLoad())             // step
                .next(playerSummarization())  // step
                .build();
    }

.1. JobInstance

A JobInstance refers to the concept of a logical job run. Each JobInstance can have multiple executions (JobExecution is discussed in more detail later in this chapter), and only one JobInstance corresponding to a particular Job and identifying JobParameters can run at a given time.

The definition of a JobInstance has absolutely no bearing on the data to be loaded. It is entirely up to the ItemReader implementation to determine how data is loaded. Using a new JobInstance means 'start from the beginning', and using an existing instance generally means 'start from where you left off'.

.2. JobParameters

Having discussed JobInstance and how it differs from Job, the natural question to ask is: "How is one JobInstance distinguished from another?" The answer is: JobParameters. A JobParameters object holds a set of parameters used to start a batch job. They can be used for identification or even as reference data during the run, as shown in the following image:

In the preceding example, where there are two instances, one for January 1st, and another for January 2nd, there is really only one Job, but it has two JobParameter objects: one that was started with a job parameter of 01-01-2017 and another that was started with a parameter of 01-02-2017. Thus, the contract can be defined as: JobInstance = Job + identifying JobParameters. This allows a developer to effectively control how a JobInstance is defined, since they control what parameters are passed in.

Not all job parameters are required to contribute to the identification of a JobInstance. By default, they do so. However, the framework also allows the submission of a Job with parameters that do not contribute to the identity of a JobInstance.
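The contract "JobInstance = Job + identifying JobParameters" can be illustrated with a small plain-Java model. This is a simplified sketch, not how Spring Batch computes identity internally; the `Param` and `JobInstanceKey` names and the `run.id` parameter are hypothetical.

```java
import java.util.Map;
import java.util.TreeMap;

// Hypothetical model of a job parameter: a value plus an "identifying" flag.
final class Param {
    final String value;
    final boolean identifying;

    Param(String value, boolean identifying) {
        this.value = value;
        this.identifying = identifying;
    }
}

final class JobInstanceKey {
    // Builds a key from the job name and only the identifying parameters,
    // sorted by name so that parameter order does not affect the result.
    static String of(String jobName, Map<String, Param> params) {
        Map<String, String> identifying = new TreeMap<>();
        params.forEach((name, param) -> {
            if (param.identifying) {
                identifying.put(name, param.value);
            }
        });
        return jobName + identifying;
    }
}
```

Under this model, two launches of the same job with the same `schedule.date` but different non-identifying `run.id` values map to the same key, and therefore to the same logical instance, while a different `schedule.date` yields a new one.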


.3. JobExecution

A JobExecution refers to the technical concept of a single attempt to run a Job. An execution may end in failure or success, but the JobInstance corresponding to a given execution is not considered to be complete unless the execution completes successfully. Using the EndOfDay Job described previously as an example, consider a JobInstance for 01-01-2017 that failed the first time it was run. If it is run again with the same identifying job parameters as the first run (01-01-2017), a new JobExecution is created. However, there is still only one JobInstance.

Step

A Step is a domain object that encapsulates an independent, sequential phase of a batch job. Therefore, every Job is composed entirely of one or more steps. A Step contains all of the information necessary to define and control the actual batch processing. As with a Job, a Step has an individual StepExecution that correlates with a unique JobExecution, as shown in the following image:

1. StepExecution

A StepExecution represents a single attempt to execute a Step. A new StepExecution is created each time a Step is run, similar to JobExecution. However, if a step fails to execute because the step before it fails, no execution is persisted for it. A StepExecution is created only when its Step is actually started.

Step executions are represented by objects of the StepExecution class. Each execution contains a reference to its corresponding step and JobExecution and transaction-related data, such as commit and rollback counts and start and end times. Additionally, each step execution contains an ExecutionContext, which contains any data a developer needs to have persisted across batch runs, such as statistics or state information needed to restart. The following table lists the properties for StepExecution:

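| Property | Definition |
| --- | --- |
| Status | A BatchStatus object that indicates the status of the execution. |
| startTime | A timestamp representing the system time when the execution was started. |
| endTime | A timestamp representing the system time when the execution finished, regardless of whether or not it was successful. |
| exitStatus | The ExitStatus indicating the result of the execution. |
| executionContext | The "property bag" containing any user data that needs to be persisted between executions. |
| readCount | The number of items that have been successfully read. |
| writeCount | The number of items that have been successfully written. |
| commitCount | The number of transactions that have been committed for this execution. |
| rollbackCount | The number of times the business transaction controlled by the Step has been rolled back. |
| readSkipCount | The number of times read has failed, resulting in a skipped item. |
| processSkipCount | The number of times process has failed, resulting in a skipped item. |
| filterCount | The number of items that have been 'filtered' by the ItemProcessor. |
| writeSkipCount | The number of times write has failed, resulting in a skipped item. |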

ExecutionContext

An ExecutionContext represents a collection of key/value pairs that are persisted and controlled by the framework in order to allow developers a place to store persistent state that is scoped to a StepExecution object or a JobExecution object. For those familiar with Quartz, it is very similar to JobDataMap. The best usage example is to facilitate restart. Using flat file input as an example, while processing individual lines, the framework periodically persists the ExecutionContext at commit points. Doing so allows the ItemReader to store its state in case a fatal error occurs during the run or even if the power goes out. All that is needed is to put the current number of lines read into the context, as shown in the following example, and the framework will do the rest:

    executionContext.putLong(getKey(LINES_READ_COUNT), reader.getPosition());

Using the EndOfDay example from the Job Stereotypes section as an example, assume there is one step, 'loadData', that loads a file into the database.

It is also important to note that there is at least one ExecutionContext per JobExecution and one for every StepExecution. For example, consider the following code snippet:

    ExecutionContext ecStep = stepExecution.getExecutionContext();
    ExecutionContext ecJob = jobExecution.getExecutionContext();
    // ecStep does not equal ecJob

As noted in the comment, ecStep does not equal ecJob. They are two different ExecutionContexts. The one scoped to the Step is saved at every commit point in the Step, whereas the one scoped to the Job is saved in between every Step execution.
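To make the restart idea concrete, here is a minimal plain-Java sketch of a reader that saves its position at commit points and resumes from it. A `Map` stands in for the ExecutionContext, and `RestartableLineReader` and its key are hypothetical; the `open` and `update` method names loosely echo the callbacks a stateful reader receives, but nothing here is Spring Batch API.

```java
import java.util.List;
import java.util.Map;

// Hypothetical line reader that saves and restores its position through a
// plain Map standing in for the ExecutionContext.
final class RestartableLineReader {
    static final String LINES_READ_COUNT = "lines.read.count"; // illustrative key

    private final List<String> lines;
    private int position;

    RestartableLineReader(List<String> lines) {
        this.lines = lines;
    }

    // Called before reading: resume from the saved position, if there is one.
    void open(Map<String, Object> context) {
        Object saved = context.get(LINES_READ_COUNT);
        position = (saved == null) ? 0 : (Integer) saved;
    }

    // Returns the next line, or null when the input is exhausted.
    String read() {
        return position < lines.size() ? lines.get(position++) : null;
    }

    // Called at each commit point: record how many lines have been read so far.
    void update(Map<String, Object> context) {
        context.put(LINES_READ_COUNT, position);
    }
}
```

If a run fails after `update` has stored a count of 2, a fresh reader opened with the same context continues at the third line instead of starting over.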

JobRepository

JobRepository is the persistence mechanism for all of the stereotypes mentioned above. It provides CRUD operations for JobLauncher, Job, and Step implementations. When a Job is first launched, a JobExecution is obtained from the repository, and, during the course of execution, StepExecution and JobExecution implementations are persisted by passing them to the repository.

When using Java configuration, the @EnableBatchProcessing annotation provides a JobRepository as one of the components automatically configured out of the box.

JobLauncher

JobLauncher represents a simple interface for launching a Job with a given set of JobParameters, as shown in the following example:

    public interface JobLauncher {

        public JobExecution run(Job job, JobParameters jobParameters)
                throws JobExecutionAlreadyRunningException, JobRestartException,
                       JobInstanceAlreadyCompleteException, JobParametersInvalidException;
    }

It is expected that implementations obtain a valid JobExecution from the JobRepository and execute the Job.

Item Reader

ItemReader is an abstraction that represents the retrieval of input for a Step, one item at a time. When the ItemReader has exhausted the items it can provide, it indicates this by returning null. More details about the ItemReader interface and its various implementations can be found in Readers And Writers.

Item Writer

ItemWriter is an abstraction that represents the output of a Step, one batch or chunk of items at a time. Generally, an ItemWriter has no knowledge of the input it should receive next and knows only the item that was passed in its current invocation. More details about the ItemWriter interface and its various implementations can be found in Readers And Writers.

Item Processor

ItemProcessor is an abstraction that represents the business processing of an item. While the ItemReader reads one item, and the ItemWriter writes them, the ItemProcessor provides an access point to transform or apply other business processing. If, while processing the item, it is determined that the item is not valid, returning null indicates that the item should not be written out. More details about the ItemProcessor interface can be found in Readers And Writers.

Configuring and Running a Job

In the domain section, the overall architecture design was discussed, using the following diagram as a guide:

While the Job object may seem like a simple container for steps, there are many configuration options of which a developer must be aware. Furthermore, there are many considerations for how a Job will be run and how its metadata will be stored during that run. This chapter will explain the various configuration options and runtime concerns of a Job.

.1. Configuring a Job

There are multiple implementations of the Job interface. However, builders abstract away the difference in configuration.

    @Bean
    public Job footballJob() {
        return this.jobBuilderFactory.get("footballJob")
                .start(playerLoad())
                .next(gameLoad())
                .next(playerSummarization())
                .build();
    }

A Job (and typically any Step within it) requires a JobRepository. The configuration of the JobRepository is handled via the BatchConfigurer.

The above example illustrates a Job that consists of three Step instances. The job related builders can also contain other elements that help with parallelisation (Split), declarative flow control (Decision) and externalization of flow definitions (Flow).

.1.1. Restartability

One key issue when executing a batch job concerns the behavior of a Job when it is restarted. The launching of a Job is considered to be a 'restart' if a JobExecution already exists for the particular JobInstance. Ideally, all jobs should be able to start up where they left off, but there are scenarios where this is not possible. It is entirely up to the developer to ensure that a new JobInstance is created in this scenario. However, Spring Batch does provide some help. If a Job should never be restarted, but should always be run as part of a new JobInstance, then the restartable property may be set to 'false'.

The following example shows how to set the restartable field to false in Java:

    @Bean
    public Job footballJob() {
        return this.jobBuilderFactory.get("footballJob")
                .preventRestart()
                ...
                .build();
    }

To phrase it another way, setting restartable to false means “this Job does not support being started again”. Restarting a Job that is not restartable causes a JobRestartException to be thrown.

    // Declared as SimpleJob because setRestartable is not part of the Job interface
    SimpleJob job = new SimpleJob();
    job.setRestartable(false);

    JobParameters jobParameters = new JobParameters();

    JobExecution firstExecution = jobRepository.createJobExecution(job, jobParameters);
    jobRepository.saveOrUpdate(firstExecution);

    try {
        jobRepository.createJobExecution(job, jobParameters);
        fail();
    }
    catch (JobRestartException e) {
        // expected
    }

This snippet of JUnit code shows that attempting to create a JobExecution the first time for a non-restartable job causes no issues. However, the second attempt throws a JobRestartException.

.1.2. Intercepting Job Execution

During the course of the execution of a Job, it may be useful to be notified of various events in its lifecycle so that custom code may be executed. The SimpleJob allows for this by calling a JobExecutionListener at the appropriate time:

    public interface JobExecutionListener {

        void beforeJob(JobExecution jobExecution);

        void afterJob(JobExecution jobExecution);

    }

JobExecutionListeners can be added to a SimpleJob by setting listeners on the job.

The following example shows how to add a listener method to a Java job definition:

    @Bean
    public Job footballJob() {
        return this.jobBuilderFactory.get("footballJob")
                .listener(sampleListener())
                ...
                .build();
    }

It should be noted that the afterJob method is called regardless of the success or failure of the Job. If success or failure needs to be determined, it can be obtained from the JobExecution, as follows:

    public void afterJob(JobExecution jobExecution) {
        if (jobExecution.getStatus() == BatchStatus.COMPLETED) {
            // job success
        }
        else if (jobExecution.getStatus() == BatchStatus.FAILED) {
            // job failure
        }
    }

The annotations corresponding to this interface are:

- @BeforeJob

- @AfterJob

.2. JobParametersValidator

A job declared in the XML namespace or using any subclass of AbstractJob can optionally declare a validator for the job parameters at runtime. This is useful when, for instance, you need to assert that a job is started with all its mandatory parameters. There is a DefaultJobParametersValidator that can be used to constrain combinations of simple mandatory and optional parameters, and for more complex constraints you can implement the interface yourself.
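The kind of check such a validator performs can be sketched in plain Java. This is a simplified model under stated assumptions, not the framework's implementation: `KeyValidator` is a hypothetical class, parameters are modeled as a `Map<String, String>`, and any key that is neither required nor optional is rejected.

```java
import java.util.Map;
import java.util.Set;

// Hypothetical validator: every required key must be present, and any key that
// is neither required nor optional is rejected.
final class KeyValidator {
    private final Set<String> required;
    private final Set<String> optional;

    KeyValidator(Set<String> required, Set<String> optional) {
        this.required = required;
        this.optional = optional;
    }

    // Throws IllegalArgumentException when the parameters break either rule.
    void validate(Map<String, String> parameters) {
        for (String key : required) {
            if (!parameters.containsKey(key)) {
                throw new IllegalArgumentException("Missing required parameter: " + key);
            }
        }
        for (String key : parameters.keySet()) {
            if (!required.contains(key) && !optional.contains(key)) {
                throw new IllegalArgumentException("Unexpected parameter: " + key);
            }
        }
    }
}
```

Failing fast at launch time, before any step runs, is the point of the design: a job that is missing a mandatory parameter never starts processing data.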

The configuration of a validator is supported through the Java builders, as shown in the following example:

    @Bean
    public Job job1() {
        return this.jobBuilderFactory.get("job1")
                .validator(parametersValidator())
                ...
                .build();
    }

.3. Java Config

Spring 3 brought the ability to configure applications via Java instead of XML. As of Spring Batch 2.2.0, batch jobs can be configured using the same Java config. There are two components for the Java-based configuration: the @EnableBatchProcessing annotation and two builders.

The @EnableBatchProcessing works similarly to the other @Enable* annotations in the Spring family. In this case, @EnableBatchProcessing provides a base configuration for building batch jobs. Within this base configuration, an instance of StepScope is created in addition to a number of beans made available to be autowired:

- JobRepository: bean name "jobRepository"

- JobLauncher: bean name "jobLauncher"

- JobRegistry: bean name "jobRegistry"

- PlatformTransactionManager: bean name "transactionManager"

- JobBuilderFactory: bean name "jobBuilders"

- StepBuilderFactory: bean name "stepBuilders"

The core interface for this configuration is the BatchConfigurer. The default implementation provides the beans mentioned above and requires a DataSource as a bean within the context to be provided. This data source is used by the JobRepository. You can customize any of these beans by creating a custom implementation of the BatchConfigurer interface. Typically, extending the DefaultBatchConfigurer (which is provided if a BatchConfigurer is not found) and overriding the required getter is sufficient. However, implementing your own from scratch may be required. The following example shows how to provide a custom transaction manager:

    @Bean
    public BatchConfigurer batchConfigurer(DataSource dataSource) {
        return new DefaultBatchConfigurer(dataSource) {
            @Override
            public PlatformTransactionManager getTransactionManager() {
                return new MyTransactionManager();
            }
        };
    }

Note: Only one configuration class needs to have the @EnableBatchProcessing annotation.

With the base configuration in place, a user can use the provided builder factories to configure a job. The following example shows a two step job configured with the JobBuilderFactory and the StepBuilderFactory:

    @Configuration
    @EnableBatchProcessing
    @Import(DataSourceConfiguration.class)
    public class AppConfig {

        @Autowired
        private JobBuilderFactory jobs;

        @Autowired
        private StepBuilderFactory steps;

        @Bean
        public Job job(@Qualifier("step1") Step step1, @Qualifier("step2") Step step2) {
            return jobs.get("myJob").start(step1).next(step2).build();
        }

        @Bean
        protected Step step1(ItemReader<Person> reader,
                             ItemProcessor<Person, Person> processor,
                             ItemWriter<Person> writer) {
            return steps.get("step1")
                    .<Person, Person> chunk(10)
                    .reader(reader)
                    .processor(processor)
                    .writer(writer)
                    .build();
        }

        @Bean
        protected Step step2(Tasklet tasklet) {
            return steps.get("step2")
                    .tasklet(tasklet)
                    .build();
        }
    }

.4. Configuring a JobRepository

When using @EnableBatchProcessing, a JobRepository is provided out of the box for you. This section addresses configuring your own.

As described earlier, the JobRepository is used for basic CRUD operations of the various persisted domain objects within Spring Batch, such as JobExecution and StepExecution. It is required by many of the major framework features, such as the JobLauncher, Job, and Step.

When using Java configuration, a JDBC-based JobRepository is provided out of the box if a DataSource is provided, and a Map-based one if not. However, you can customize the configuration of the JobRepository through an implementation of the BatchConfigurer interface.

    ...
    // This would reside in your BatchConfigurer implementation
    @Override
    protected JobRepository createJobRepository() throws Exception {
        JobRepositoryFactoryBean factory = new JobRepositoryFactoryBean();
        factory.setDataSource(dataSource);
        factory.setTransactionManager(transactionManager);
        factory.setIsolationLevelForCreate("ISOLATION_SERIALIZABLE");
        factory.setTablePrefix("BATCH_");
        factory.setMaxVarCharLength(1000);
        return factory.getObject();
    }
    ...

None of the configuration options listed above are required except the dataSource and transactionManager. If the others are not set, the framework defaults are used; they are shown above for awareness purposes. The max varchar length defaults to 2500, which is the length of the long VARCHAR columns in the sample schema scripts.

.5. Configuring a JobLauncher

When using @EnableBatchProcessing, a JobLauncher is provided out of the box for you.

This section addresses configuring your own.

The most basic implementation of the JobLauncher interface is the SimpleJobLauncher. Its only required dependency is a JobRepository, in order to obtain an execution.

The following example shows a SimpleJobLauncher in Java:

...

// This would reside in your BatchConfigurer implementation

@Override

protected JobLauncher createJobLauncher() throws Exception {

SimpleJobLauncher jobLauncher = new SimpleJobLauncher();

jobLauncher.setJobRepository(jobRepository);

jobLauncher.afterPropertiesSet();

return jobLauncher;

}

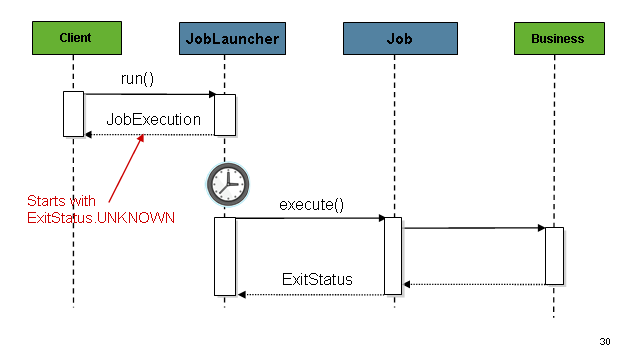

...Once a JobExecution is obtained, it is passed to the

execute method of Job, ultimately returning the JobExecution to the caller, as shown

in the following image:

The sequence is straightforward and works well when launched from a scheduler. However, issues arise when trying to launch from an HTTP request. In this scenario, the launching needs to be done asynchronously so that the SimpleJobLauncher returns immediately to its caller. This is because it is not good practice to keep an HTTP request open for the amount of time needed by long running processes such as batch. The following image shows an example sequence:

The SimpleJobLauncher can be configured to allow for this scenario by configuring a TaskExecutor.

The following Java example shows a SimpleJobLauncher configured to return immediately:

    @Bean
    public JobLauncher jobLauncher() {
        SimpleJobLauncher jobLauncher = new SimpleJobLauncher();
        jobLauncher.setJobRepository(jobRepository());
        jobLauncher.setTaskExecutor(new SimpleAsyncTaskExecutor());
        jobLauncher.afterPropertiesSet();
        return jobLauncher;
    }

Any implementation of the Spring TaskExecutor interface can be used to control how jobs are asynchronously executed.
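The difference between synchronous and asynchronous launching can be illustrated with the JDK's own executor API. This is a rough analogy rather than Spring code: `AsyncLauncher` is a hypothetical class, and the returned `Future` plays the role of the handle that the caller receives while the job is still running.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

// Hypothetical launcher: hands the job to an executor and returns at once.
final class AsyncLauncher {
    private final ExecutorService executor = Executors.newSingleThreadExecutor();

    // Submits the job and returns a handle without waiting for the job to finish.
    // The handle yields "COMPLETED" once the job has run to the end.
    Future<String> launch(Runnable job) {
        return executor.submit(job, "COMPLETED");
    }

    // Stops accepting new jobs; already submitted jobs still run.
    void shutdown() {
        executor.shutdown();
    }
}
```

The caller gets the handle back immediately and can check on it later, which is what allows an HTTP request thread to return without waiting for the batch work.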

.6. Running a Job

At a minimum, launching a batch job requires two things: the Job to be launched and a JobLauncher. Both can be contained within the same context or different contexts. For example, if launching a job from the command line, a new JVM will be instantiated for each Job, and thus every job will have its own JobLauncher. However, if running from within a web container within the scope of an HttpRequest, there will usually be one JobLauncher, configured for asynchronous job launching, that multiple requests will invoke to launch their jobs.

.6.1. Running Jobs from the Command Line

For users that want to run their jobs from an enterprise scheduler, the command line is the primary interface. This is because most schedulers (with the exception of Quartz unless using the NativeJob) work directly with operating system processes, primarily kicked off with shell scripts. There are many ways to launch a Java process besides a shell script, such as Perl, Ruby, or even 'build tools' such as ant or maven. However, because most people are familiar with shell scripts, this example will focus on them.

The CommandLineJobRunner

Because the script launching the job must kick off a Java Virtual Machine, there needs to be a class with a main method to act as the primary entry point. Spring Batch provides an implementation that serves just this purpose: CommandLineJobRunner. It’s important to note that this is just one way to bootstrap your application, but there are many ways to launch a Java process, and this class should in no way be viewed as definitive. The CommandLineJobRunner performs four tasks:

- Load the appropriate ApplicationContext.

- Parse command line arguments into JobParameters.

- Locate the appropriate job based on arguments.

- Use the JobLauncher provided in the application context to launch the job.

All of these tasks are accomplished using only the arguments passed in. The following are required arguments:

| Argument | Description |
| --- | --- |
| jobPath | The location of the XML file that will be used to create an ApplicationContext. |
| jobName | The name of the job to be run. |

These arguments must be passed in with the path first and the name second. All arguments after these are considered to be job parameters, are turned into a JobParameters object, and must be in the format of 'name=value'.
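The argument convention just described (path first, name second, then 'name=value' pairs) can be sketched as a small plain-Java parser. This is an illustration only: `ArgParser` is a hypothetical class, it returns raw strings, and it does not interpret type suffixes such as `(date)` in a parameter name.

```java
import java.util.LinkedHashMap;
import java.util.Map;

final class ArgParser {
    // Treats args[0] as the job path, args[1] as the job name, and every
    // remaining argument as a name=value job parameter.
    static Map<String, String> parseParameters(String[] args) {
        if (args.length < 2) {
            throw new IllegalArgumentException("Usage: <jobPath> <jobName> [name=value ...]");
        }
        Map<String, String> parameters = new LinkedHashMap<>();
        for (int i = 2; i < args.length; i++) {
            int eq = args[i].indexOf('=');
            if (eq <= 0) {
                throw new IllegalArgumentException("Expected name=value but got: " + args[i]);
            }
            // Split on the first '=' only, so values may themselves contain '='.
            parameters.put(args[i].substring(0, eq), args[i].substring(eq + 1));
        }
        return parameters;
    }
}
```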

The following example shows a date passed as a job parameter to a job defined in Java:

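    bash$ java CommandLineJobRunner io.spring.EndOfDayJobConfiguration endOfDay schedule.date(date)=2007/05/05

Note: By default, the CommandLineJobRunner uses a DefaultJobParametersConverter that implicitly converts key/value pairs to identifying job parameters. This behaviour can be overridden by using a custom JobParametersConverter.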

In most cases you would want to use a manifest to declare your main class in a jar, but, for simplicity, the class was used directly. This example uses the same 'EndOfDay' example from the domain language of batch section. The first argument is 'io.spring.EndOfDayJobConfiguration', which is the fully qualified class name of the configuration class containing the Job. The second argument, 'endOfDay', represents the job name. The final argument, 'schedule.date(date)=2007/05/05', is converted into a JobParameters object.

The following example shows a sample configuration for endOfDay in Java:

    @Configuration
    @EnableBatchProcessing
    public class EndOfDayJobConfiguration {

        @Autowired
        private JobBuilderFactory jobBuilderFactory;

        @Autowired
        private StepBuilderFactory stepBuilderFactory;

        @Bean
        public Job endOfDay() {
            return this.jobBuilderFactory.get("endOfDay")
                    .start(step1())
                    .build();
        }

        @Bean
        public Step step1() {
            return this.stepBuilderFactory.get("step1")
                    .tasklet((contribution, chunkContext) -> null)
                    .build();
        }
    }

The preceding example is overly simplistic, since there are many more requirements to run a batch job in Spring Batch in general, but it serves to show the two main requirements of the CommandLineJobRunner: Job and JobLauncher.

ExitCodes

When launching a batch job from the command-line, an enterprise scheduler is often used. Most schedulers are fairly dumb and work only at the process level. This means that they only know about some operating system process such as a shell script that they’re invoking. In this scenario, the only way to communicate back to the scheduler about the success or failure of a job is through return codes. A return code is a number that is returned to a scheduler by the process that indicates the result of the run. In the simplest case: 0 is success and 1 is failure. However, there may be more complex scenarios: If job A returns 4 kick off job B, and if it returns 5 kick off job C. This type of behavior is configured at the scheduler level, but it is important that a processing framework such as Spring Batch provide a way to return a numeric representation of the 'Exit Code' for a particular batch job. In Spring Batch this is encapsulated within an ExitStatus, which is covered in more detail in Chapter 5. For the purposes of discussing exit codes, the only important thing to know is that an ExitStatus has an exit code property that is set by the framework (or the developer) and is returned as part of the JobExecution returned from the JobLauncher. The CommandLineJobRunner converts this string value to a number using the ExitCodeMapper interface:

public interface ExitCodeMapper {

public int intValue(String exitCode);

}The essential contract of an

ExitCodeMapper is that, given a string exit

code, a number representation will be returned. The default

implementation used by the job runner is the SimpleJvmExitCodeMapper

that returns 0 for completion, 1 for generic errors, and 2 for any job

runner errors such as not being able to find a

Job in the provided context. If anything more

complex than the 3 values above is needed, then a custom

implementation of the ExitCodeMapper interface

must be supplied. Because the

CommandLineJobRunner is the class that creates

an ApplicationContext, and thus cannot be

'wired together', any values that need to be overwritten must be

autowired. This means that if an implementation of

ExitCodeMapper is found within the BeanFactory,

it will be injected into the runner after the context is created. All

that needs to be done to provide your own

ExitCodeMapper is to declare the implementation

as a root level bean and ensure that it is part of the

ApplicationContext that is loaded by the

runner.
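As a rough sketch of what such a custom mapping might look like, the class below maps a handful of exit code strings to numbers. The interface is redeclared locally only so that the example compiles on its own; in a real application the class would implement Spring Batch's own ExitCodeMapper. The class name SchedulerExitCodeMapper, the exit code strings COMPLETED_WITH_SKIPS and NO_INPUT, and the values 4 and 5 are hypothetical, chosen to echo the scheduler scenario described above.

```java
import java.util.HashMap;
import java.util.Map;

// Local stand-in for Spring Batch's ExitCodeMapper so the sketch is self-contained.
interface ExitCodeMapper {
    int intValue(String exitCode);
}

// Hypothetical mapper: any exit code without an entry falls back to 1 (generic error).
class SchedulerExitCodeMapper implements ExitCodeMapper {

    private final Map<String, Integer> mapping = new HashMap<>();

    SchedulerExitCodeMapper() {
        mapping.put("COMPLETED", 0);
        mapping.put("FAILED", 1);
        mapping.put("COMPLETED_WITH_SKIPS", 4); // hypothetical custom exit code
        mapping.put("NO_INPUT", 5);             // hypothetical custom exit code
    }

    @Override
    public int intValue(String exitCode) {
        return mapping.getOrDefault(exitCode, 1);
    }
}
```

With a mapping like this, a scheduler could start one follow-up job when the process returns 4 and a different one when it returns 5.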

Running Jobs from within a Web Container

Historically, offline processing such as batch jobs has been
launched from the command-line, as described above. However, there are
many cases where launching from an HttpRequest is
a better option. Such use cases include reporting, ad-hoc job
running, and web application support. Because a batch job by definition
is long running, the most important concern is to launch the
job asynchronously.

The controller in this case is a Spring MVC controller. More

information on Spring MVC can be found here: https://docs.spring.io/spring/docs/current/spring-framework-reference/web.html#mvc.

The controller launches a Job using a

JobLauncher that has been configured to launch

asynchronously, which

immediately returns a JobExecution. The
Job will likely still be running. However, this
nonblocking behaviour allows the controller to return immediately, which
is required when handling an HttpRequest. An
example is below:

@Controller

public class JobLauncherController {

@Autowired

JobLauncher jobLauncher;

@Autowired

Job job;

@RequestMapping("/jobLauncher.html")

public void handle() throws Exception{

jobLauncher.run(job, new JobParameters());

}

}

Advanced Meta-Data Usage

So far, both the JobLauncher and JobRepository interfaces have been

discussed. Together, they represent simple launching of a job, and basic

CRUD operations of batch domain objects:

A JobLauncher uses the

JobRepository to create new

JobExecution objects and run them.

Job and Step implementations

later use the same JobRepository for basic updates

of the same executions during the running of a Job.

The basic operations suffice for simple scenarios, but in a large batch

environment with hundreds of batch jobs and complex scheduling

requirements, more advanced access of the meta data is required:

The JobExplorer and

JobOperator interfaces, which will be discussed

below, add additional functionality for querying and controlling the meta

data.

Configuring a Step

As discussed in the domain chapter, a Step is a

domain object that encapsulates an independent, sequential phase of a batch job and

contains all of the information necessary to define and control the actual batch

processing. This is a necessarily vague description because the contents of any given

Step are at the discretion of the developer writing a Job. A Step can be as simple

or complex as the developer desires. A simple Step might load data from a file into the

database, requiring little or no code (depending upon the implementations used). A more

complex Step might have complicated business rules that are applied as part of the

processing, as shown in the following image:

Chunk-oriented Processing

Spring Batch uses a 'Chunk-oriented' processing style within its most common

implementation. Chunk oriented processing refers to reading the data one at a time and

creating 'chunks' that are written out within a transaction boundary. Once the number of

items read equals the commit interval, the entire chunk is written out by the

ItemWriter, and then the transaction is committed. The following image shows the

process:

The following pseudo code shows the same concepts in a simplified form:

List<Object> items = new ArrayList<>();
for (int i = 0; i < commitInterval; i++) {
    Object item = itemReader.read();
    if (item != null) {
        items.add(item);
    }
}
itemWriter.write(items);

A chunk-oriented step can also be configured with an optional ItemProcessor

to process items before passing them to the ItemWriter. The following image

shows the process when an ItemProcessor is registered in the step:

The following pseudo code shows how this is implemented in a simplified form:

List<Object> items = new ArrayList<>();
for (int i = 0; i < commitInterval; i++) {
    Object item = itemReader.read();
    if (item != null) {
        items.add(item);
    }
}

List<Object> processedItems = new ArrayList<>();
for (Object item : items) {
    Object processedItem = itemProcessor.process(item);
    if (processedItem != null) {
        processedItems.add(processedItem);
    }
}
itemWriter.write(processedItems);

For more details about item processors and their use cases, please refer to the Item processing section.

Configuring a Step

Despite the relatively short list of required dependencies for a Step, it is an

extremely complex class that can potentially contain many collaborators.

When using Java configuration, the Spring Batch builders can be used, as shown in the following example:

/**

* Note the JobRepository is typically autowired in and not needed to be explicitly

* configured

*/

@Bean

public Job sampleJob(JobRepository jobRepository, Step sampleStep) {

return this.jobBuilderFactory.get("sampleJob")

.repository(jobRepository)

.start(sampleStep)

.build();

}

/**

* Note the TransactionManager is typically autowired in and not needed to be explicitly

* configured

*/

@Bean

public Step sampleStep(PlatformTransactionManager transactionManager) {

return this.stepBuilderFactory.get("sampleStep")

.transactionManager(transactionManager)

.<String, String>chunk(10)

.reader(itemReader())

.writer(itemWriter())

.build();

}

The configuration above includes the only required dependencies to create an item-oriented step:

- reader: The ItemReader that provides items for processing.

- writer: The ItemWriter that processes the items provided by the ItemReader.

- transactionManager: Spring’s PlatformTransactionManager that begins and commits transactions during processing.

- repository: The Java-specific name of the JobRepository that periodically stores the StepExecution and ExecutionContext during processing (just before committing).

- chunk: The Java-specific name of the dependency that indicates that this is an item-based step and the number of items to be processed before the transaction is committed.

It should be noted that repository defaults to jobRepository and transactionManager

defaults to transactionManager (all provided through the infrastructure from

@EnableBatchProcessing). Also, the ItemProcessor is optional, since the item could be

directly passed from the reader to the writer.

The Commit Interval

As mentioned previously, a step reads in and writes out items, periodically committing

using the supplied PlatformTransactionManager. With a commit-interval of 1, it

commits after writing each individual item. This is less than ideal in many situations,

since beginning and committing a transaction is expensive. Ideally, it is preferable to

process as many items as possible in each transaction, which is completely dependent upon

the type of data being processed and the resources with which the step is interacting.

For this reason, the number of items that are processed within a commit can be

configured.

The following example shows a step whose tasklet has a commit-interval

value of 10 as it would be defined in Java:

@Bean

public Job sampleJob() {

return this.jobBuilderFactory.get("sampleJob")

.start(step1())

.build();

}

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(10)

.reader(itemReader())

.writer(itemWriter())

.build();

}

In the preceding example, 10 items are processed within each transaction. At the

beginning of processing, a transaction is begun. Also, each time read is called on the

ItemReader, a counter is incremented. When it reaches 10, the list of aggregated items

is passed to the ItemWriter, and the transaction is committed.
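The effect of the commit interval on chunk boundaries can be illustrated with a small simulation. This is only a sketch that uses plain Java collections in place of an ItemReader and ItemWriter; the class and method names are hypothetical, and it does not reproduce Spring Batch's actual implementation.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

// Simplified simulation of chunk boundaries; not Spring Batch's actual implementation.
class CommitIntervalSketch {

    // Returns the size of each chunk that would be written (one "commit" per entry).
    static List<Integer> chunkSizes(List<String> input, int commitInterval) {
        List<Integer> sizes = new ArrayList<>();
        Iterator<String> reader = input.iterator();
        while (reader.hasNext()) {
            List<String> chunk = new ArrayList<>();
            // Read until the chunk is full or the input is exhausted.
            while (chunk.size() < commitInterval && reader.hasNext()) {
                chunk.add(reader.next());
            }
            sizes.add(chunk.size()); // the write and the commit would happen here
        }
        return sizes;
    }

    public static void main(String[] args) {
        List<String> input = new ArrayList<>();
        for (int i = 0; i < 25; i++) {
            input.add("item-" + i);
        }
        System.out.println(chunkSizes(input, 10)); // prints [10, 10, 5]
    }
}
```

With 25 input items and a commit interval of 10, the simulation produces two full chunks and a final partial chunk of 5 items.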

Configuring a Step for Restart

In the "Configuring and Running a Job" section, restarting a

Job was discussed. Restart has numerous impacts on steps, and, consequently, may

require some specific configuration.

Setting a Start Limit

There are many scenarios where you may want to control the number of times a Step may

be started. For example, a particular Step might need to be configured so that it only

runs once because it invalidates some resource that must be fixed manually before it can

be run again. This is configurable on the step level, since different steps may have

different requirements. A Step that may only be executed once can exist as part of the

same Job as a Step that can be run infinitely.

The following code fragment shows an example of a start limit configuration in Java:

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(10)

.reader(itemReader())

.writer(itemWriter())

.startLimit(1)

.build();

}

The step shown in the preceding example can be run only once. Attempting to run it again

causes a StartLimitExceededException to be thrown. Note that the default value for the

start-limit is Integer.MAX_VALUE.

Restarting a Completed Step

In the case of a restartable job, there may be one or more steps that should always be

run, regardless of whether or not they were successful the first time. An example might

be a validation step or a Step that cleans up resources before processing. During

normal processing of a restarted job, any step with a status of 'COMPLETED', meaning it

has already been completed successfully, is skipped. Setting allow-start-if-complete to

"true" overrides this so that the step always runs.

The following code fragment shows how to define a restartable job in Java:

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(10)

.reader(itemReader())

.writer(itemWriter())

.allowStartIfComplete(true)

.build();

}

Step Restart Configuration Example

The following Java example shows how to configure a job to have steps that can be restarted:

@Bean

public Job footballJob() {

return this.jobBuilderFactory.get("footballJob")

.start(playerLoad())

.next(gameLoad())

.next(playerSummarization())

.build();

}

@Bean

public Step playerLoad() {

return this.stepBuilderFactory.get("playerLoad")

.<String, String>chunk(10)

.reader(playerFileItemReader())

.writer(playerWriter())

.build();

}

@Bean

public Step gameLoad() {

return this.stepBuilderFactory.get("gameLoad")

.allowStartIfComplete(true)

.<String, String>chunk(10)

.reader(gameFileItemReader())

.writer(gameWriter())

.build();

}

@Bean

public Step playerSummarization() {

return this.stepBuilderFactory.get("playerSummarization")

.startLimit(2)

.<String, String>chunk(10)

.reader(playerSummarizationSource())

.writer(summaryWriter())

.build();

}

Configuring Skip Logic

There are many scenarios where errors encountered while processing should not result in

Step failure, but should be skipped instead. This is usually a decision that must be

made by someone who understands the data itself and what meaning it has. Financial data,

for example, may not be skippable because it results in money being transferred, which

needs to be completely accurate. Loading a list of vendors, on the other hand, might

allow for skips. If a vendor is not loaded because it was formatted incorrectly or was

missing necessary information, then there probably are not issues. Usually, these bad

records are logged as well, which is covered later when discussing listeners.

The following Java example shows an example of using a skip limit:

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(10)

.reader(flatFileItemReader())

.writer(itemWriter())

.faultTolerant()

.skipLimit(10)

.skip(FlatFileParseException.class)

.build();

}

In the preceding example, a FlatFileItemReader is used. If, at any point, a
FlatFileParseException is thrown, the item is skipped and counted against the total
skip limit of 10. The declared exceptions (and their subclasses) might be thrown during
any phase of the chunk processing (read, process, or write). Separate counts are kept
inside the step execution for skips on read, process, and write, but the limit applies
across all skips. Once the skip limit is reached, the next exception found causes the
step to fail. In other words, the eleventh skip triggers the exception, not the tenth.
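The counting rule (ten skips are tolerated and the eleventh fails the step) can be modelled with a small counter. The following is a minimal sketch; the class SkipLimitSketch and its methods are illustrative names and not part of the Spring Batch API.

```java
// Simplified model of skip counting; the names here are illustrative, not Spring Batch API.
class SkipLimitSketch {

    private final int skipLimit;
    private int skipCount;

    SkipLimitSketch(int skipLimit) {
        this.skipLimit = skipLimit;
    }

    // Called once per skippable exception. Fails if the limit has already been used up.
    void onSkippableException(Exception cause) {
        if (skipCount >= skipLimit) {
            throw new IllegalStateException("Skip limit of " + skipLimit + " exceeded", cause);
        }
        skipCount++;
    }

    int getSkipCount() {
        return skipCount;
    }
}
```

With a limit of 10, the first ten calls to onSkippableException only increment the counter, and the eleventh call throws.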

One problem with the preceding example is that any other exception besides a

FlatFileParseException causes the Job to fail. In certain scenarios, this may be the

correct behavior. However, in other scenarios, it may be easier to identify which

exceptions should cause failure and skip everything else.

The following Java example shows an example excluding a particular exception:

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(10)

.reader(flatFileItemReader())

.writer(itemWriter())

.faultTolerant()

.skipLimit(10)

.skip(Exception.class)

.noSkip(FileNotFoundException.class)

.build();

}

By identifying java.lang.Exception as a skippable exception class, the configuration
indicates that all Exceptions are skippable. However, by 'excluding'
java.io.FileNotFoundException, the configuration refines the list of skippable
exception classes to be all Exceptions except FileNotFoundException. Any excluded
exception class is fatal if encountered (that is, it is not skipped).

For any exception encountered, the skippability is determined by the nearest superclass in the class hierarchy. Any unclassified exception is treated as 'fatal'.

The order of the skip and noSkip method calls does not matter.
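The "nearest superclass" rule can be sketched with a small classifier that walks up the class hierarchy of the thrown exception until it finds a configured type. This is an illustration of the rule as described here, not the framework's own classifier; the class SkipClassifierSketch and its methods are hypothetical.

```java
import java.util.HashMap;
import java.util.Map;

// Illustrative classifier: the nearest configured superclass decides skippability.
class SkipClassifierSketch {

    private final Map<Class<? extends Throwable>, Boolean> rules = new HashMap<>();

    SkipClassifierSketch skip(Class<? extends Throwable> type) {
        rules.put(type, true);
        return this;
    }

    SkipClassifierSketch noSkip(Class<? extends Throwable> type) {
        rules.put(type, false);
        return this;
    }

    boolean shouldSkip(Throwable t) {
        // Walk up from the concrete class until a configured type is found.
        for (Class<?> c = t.getClass(); c != null; c = c.getSuperclass()) {
            Boolean decision = rules.get(c);
            if (decision != null) {
                return decision;
            }
        }
        return false; // unclassified exceptions are treated as fatal
    }
}
```

Because the lookup depends only on the class hierarchy, registering skip(Exception.class) before or after noSkip(FileNotFoundException.class) gives the same result in this sketch.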

Configuring Retry Logic

In most cases, you want an exception to cause either a skip or a Step failure. However,

not all exceptions are deterministic. If a FlatFileParseException is encountered while
reading, it is always thrown for that record. Resetting the ItemReader does not help.
However, for other exceptions (such as a DeadlockLoserDataAccessException, which
indicates that the current process has attempted to update a record that another process
holds a lock on), waiting and trying again might result in success.

In Java, retry should be configured as follows:

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(2)

.reader(itemReader())

.writer(itemWriter())

.faultTolerant()

.retryLimit(3)

.retry(DeadlockLoserDataAccessException.class)

.build();

}

The Step allows a limit for the number of times an individual item can be retried and a
list of exceptions that are 'retryable'. More details on how retry works can be found in
the retry section.
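The general idea of retrying only a declared exception type, up to a limit, can be sketched in plain Java. This is not how Spring Batch implements retry internally; the class RetrySketch and the parameter maxAttempts are hypothetical, and this sketch counts the first call as one of the attempts.

```java
import java.util.concurrent.Callable;

// Generic retry loop; a sketch of the concept, not Spring Batch's retry implementation.
class RetrySketch {

    // Runs the action up to maxAttempts times, retrying only on the given exception type.
    static <T> T execute(Callable<T> action, int maxAttempts,
                         Class<? extends Exception> retryable) throws Exception {
        if (maxAttempts < 1) {
            throw new IllegalArgumentException("maxAttempts must be at least 1");
        }
        Exception last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                return action.call();
            } catch (Exception e) {
                if (!retryable.isInstance(e)) {
                    throw e; // not retryable: fail immediately
                }
                last = e; // retryable: try again if attempts remain
            }
        }
        throw last; // all attempts were used up
    }
}
```

An exception that is not an instance of the retryable type is rethrown on the first occurrence, which corresponds to the deterministic failures described above.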

Controlling Rollback

By default, regardless of retry or skip, any exceptions thrown from the ItemWriter

cause the transaction controlled by the Step to roll back. If skip is configured as

described earlier, exceptions thrown from the ItemReader do not cause a rollback.

However, there are many scenarios in which exceptions thrown from the ItemWriter should

not cause a rollback, because no action has taken place to invalidate the transaction.

For this reason, the Step can be configured with a list of exceptions that should not

cause rollback.

In Java, you can control rollback as follows:

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(2)

.reader(itemReader())

.writer(itemWriter())

.faultTolerant()

.noRollback(ValidationException.class)

.build();

}

Transactional Readers

The basic contract of the ItemReader is that it is forward only. The step buffers

reader input, so that in the case of a rollback, the items do not need to be re-read

from the reader. However, there are certain scenarios in which the reader is built on

top of a transactional resource, such as a JMS queue. In this case, since the queue is

tied to the transaction that is rolled back, the messages that have been pulled from the

queue are put back on. For this reason, the step can be configured to not buffer the

items.

The following example shows how to create a reader that does not buffer items in Java:

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(2)

.reader(itemReader())

.writer(itemWriter())

.readerIsTransactionalQueue()

.build();

}

Transaction Attributes

Transaction attributes can be used to control the isolation, propagation, and
timeout settings. More information on setting transaction attributes can be found in
the Spring core documentation.

The following example sets the isolation, propagation, and timeout transaction

attributes in Java:

@Bean

public Step step1() {

DefaultTransactionAttribute attribute = new DefaultTransactionAttribute();

attribute.setPropagationBehavior(Propagation.REQUIRED.value());

attribute.setIsolationLevel(Isolation.DEFAULT.value());

attribute.setTimeout(30);

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(2)

.reader(itemReader())

.writer(itemWriter())

.transactionAttribute(attribute)

.build();

}

Registering ItemStream with a Step

The step has to take care of ItemStream callbacks at the necessary points in its
lifecycle (for more information on the ItemStream interface, see
ItemStream). This is vital if a step fails and might

need to be restarted, because the ItemStream interface is where the step gets the

information it needs about persistent state between executions.

If the ItemReader, ItemProcessor, or ItemWriter itself implements the ItemStream

interface, then these are registered automatically. Any other streams need to be

registered separately. This is often the case where indirect dependencies, such as

delegates, are injected into the reader and writer. A stream can be registered on the

step through the 'stream' element.

The following example shows how to register a stream on a step in Java:

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(2)

.reader(itemReader())

.writer(compositeItemWriter())

.stream(fileItemWriter1())

.stream(fileItemWriter2())

.build();

}

/**

* In Spring Batch 4, the CompositeItemWriter implements ItemStream so this isn't

* necessary, but used for an example.

*/

@Bean

public CompositeItemWriter compositeItemWriter() {

List<ItemWriter> writers = new ArrayList<>(2);

writers.add(fileItemWriter1());

writers.add(fileItemWriter2());

CompositeItemWriter itemWriter = new CompositeItemWriter();

itemWriter.setDelegates(writers);

return itemWriter;

}

In the example above, the CompositeItemWriter is not an ItemStream, but both of its

delegates are. Therefore, both delegate writers must be explicitly registered as streams

in order for the framework to handle them correctly. The ItemReader does not need to be

explicitly registered as a stream because it is a direct property of the Step. The step

is now restartable, and the state of the reader and writer is correctly persisted in the

event of a failure.

Intercepting Step Execution

Just as with the Job, there are many events during the execution of a Step where a

user may need to perform some functionality. For example, in order to write out to a flat

file that requires a footer, the ItemWriter needs to be notified when the Step has

been completed, so that the footer can be written. This can be accomplished with one of many

Step scoped listeners.

Any class that implements one of the extensions of StepListener (but not that interface

itself since it is empty) can be applied to a step through the listeners element.

The listeners element is valid inside a step, tasklet, or chunk declaration. It is

recommended that you declare a listener at the level at which its function applies,

or, if it is multi-featured (such as StepExecutionListener and ItemReadListener),

then declare it at the most granular level where it applies.

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.<String, String>chunk(10)

.reader(reader())

.writer(writer())

.listener(chunkListener())

.build();

}

An ItemReader, ItemWriter, or ItemProcessor that itself implements one of the
StepListener interfaces is registered automatically with the Step if using the
namespace <step> element or one of the *StepFactoryBean factories. This only

applies to components directly injected into the Step. If the listener is nested inside

another component, it needs to be explicitly registered (as described previously under

Registering ItemStream with a Step).

In addition to the StepListener interfaces, annotations are provided to address the

same concerns. Plain old Java objects can have methods with these annotations that are

then converted into the corresponding StepListener type. It is also common to annotate

custom implementations of chunk components such as ItemReader or ItemWriter or

Tasklet. The annotations are analyzed by the XML parser for the <listener/> elements

as well as registered with the listener methods in the builders, so all you need to do

is use the XML namespace or builders to register the listeners with a step.

StepExecutionListener

StepExecutionListener represents the most generic listener for Step execution. It

allows for notification before a Step is started and after it ends, whether it ended

normally or failed, as shown in the following example:

public interface StepExecutionListener extends StepListener {

void beforeStep(StepExecution stepExecution);

ExitStatus afterStep(StepExecution stepExecution);

}

ExitStatus is the return type of afterStep in order to allow listeners the chance to

modify the exit code that is returned upon completion of a Step.

The annotations corresponding to this interface are:

- @BeforeStep

- @AfterStep

ChunkListener

A chunk is defined as the items processed within the scope of a transaction. Committing a

transaction, at each commit interval, commits a 'chunk'. A ChunkListener can be used to

perform logic before a chunk begins processing or after a chunk has completed

successfully, as shown in the following interface definition:

public interface ChunkListener extends StepListener {

void beforeChunk(ChunkContext context);

void afterChunk(ChunkContext context);

void afterChunkError(ChunkContext context);

}

The beforeChunk method is called after the transaction is started but before read is

called on the ItemReader. Conversely, afterChunk is called after the chunk has been

committed (and not at all if there is a rollback).

The annotations corresponding to this interface are:

- @BeforeChunk

- @AfterChunk

- @AfterChunkError

A ChunkListener can be applied when there is no chunk declaration. The TaskletStep is

responsible for calling the ChunkListener, so it applies to a non-item-oriented tasklet

as well (it is called before and after the tasklet).

ItemReadListener

When discussing skip logic previously, it was mentioned that it may be beneficial to log

the skipped records, so that they can be dealt with later. In the case of read errors,

this can be done with an ItemReadListener, as shown in the following interface

definition:

public interface ItemReadListener<T> extends StepListener {

void beforeRead();

void afterRead(T item);

void onReadError(Exception ex);

}

The beforeRead method is called before each call to read on the ItemReader. The

afterRead method is called after each successful call to read and is passed the item

that was read. If there was an error while reading, the onReadError method is called.

The exception encountered is provided so that it can be logged.

The annotations corresponding to this interface are:

- @BeforeRead

- @AfterRead

- @OnReadError

ItemProcessListener

Just as with the ItemReadListener, the processing of an item can be 'listened' to, as

shown in the following interface definition:

public interface ItemProcessListener<T, S> extends StepListener {

void beforeProcess(T item);

void afterProcess(T item, S result);

void onProcessError(T item, Exception e);

}

The beforeProcess method is called before process on the ItemProcessor and is

handed the item that is to be processed. The afterProcess method is called after the

item has been successfully processed. If there was an error while processing, the

onProcessError method is called. The exception encountered and the item that was

attempted to be processed are provided, so that they can be logged.

The annotations corresponding to this interface are:

- @BeforeProcess

- @AfterProcess

- @OnProcessError

ItemWriteListener

The writing of an item can be 'listened' to with the ItemWriteListener, as shown in the

following interface definition:

public interface ItemWriteListener<S> extends StepListener {

void beforeWrite(List<? extends S> items);

void afterWrite(List<? extends S> items);

void onWriteError(Exception exception, List<? extends S> items);

}

The beforeWrite method is called before write on the ItemWriter and is handed the

list of items that is written. The afterWrite method is called after the item has been

successfully written. If there was an error while writing, the onWriteError method is

called. The exception encountered and the item that was attempted to be written are

provided, so that they can be logged.

The annotations corresponding to this interface are:

- @BeforeWrite

- @AfterWrite

- @OnWriteError

SkipListener

ItemReadListener, ItemProcessListener, and ItemWriteListener all provide mechanisms

for being notified of errors, but none informs you that a record has actually been

skipped. onWriteError, for example, is called even if an item is retried and

successful. For this reason, there is a separate interface for tracking skipped items, as

shown in the following interface definition:

public interface SkipListener<T,S> extends StepListener {

void onSkipInRead(Throwable t);

void onSkipInProcess(T item, Throwable t);

void onSkipInWrite(S item, Throwable t);

}

onSkipInRead is called whenever an item is skipped while reading. It should be noted

that rollbacks may cause the same item to be registered as skipped more than once.

onSkipInWrite is called when an item is skipped while writing. Because the item has

been read successfully (and not skipped), it is also provided the item itself as an

argument.

The annotations corresponding to this interface are:

- @OnSkipInRead

- @OnSkipInWrite

- @OnSkipInProcess

SkipListeners and Transactions

One of the most common use cases for a SkipListener is to log out a skipped item, so

that another batch process or even human process can be used to evaluate and fix the

issue leading to the skip. Because there are many cases in which the original transaction

may be rolled back, Spring Batch makes two guarantees:

- The appropriate skip method (depending on when the error happened) is called only once per item.

- The SkipListener is always called just before the transaction is committed. This is to ensure that any transactional resources called by the listener are not rolled back by a failure within the ItemWriter.

TaskletStep

Chunk-oriented processing is not the only way to process in a

Step. What if a Step must consist of a simple stored procedure call? You could

implement the call as an ItemReader and return null after the procedure finishes.

However, doing so is a bit unnatural, since there would need to be a no-op ItemWriter.

Spring Batch provides the TaskletStep for this scenario.

Tasklet is a simple interface that has one method, execute, which is called

repeatedly by the TaskletStep until it either returns RepeatStatus.FINISHED or throws

an exception to signal a failure. Each call to a Tasklet is wrapped in a transaction.

Tasklet implementors might call a stored procedure, a script, or a simple SQL update

statement.
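The repeated invocation described above can be sketched as a simple loop. The types below are local stand-ins that only mirror the shape of Spring Batch's RepeatStatus and Tasklet: the real execute method takes a StepContribution and a ChunkContext (as in the lambda shown earlier), which this sketch omits, and the names SimpleTasklet and TaskletLoopSketch are hypothetical.

```java
// Local stand-ins mirroring the shape of Spring Batch's RepeatStatus and Tasklet.
enum RepeatStatus { CONTINUABLE, FINISHED }

interface SimpleTasklet {
    RepeatStatus execute() throws Exception;
}

class TaskletLoopSketch {

    // Calls the tasklet until it stops reporting CONTINUABLE; returns the number of calls made.
    static int run(SimpleTasklet tasklet) throws Exception {
        int calls = 0;
        RepeatStatus status;
        do {
            // In Spring Batch, each call would be wrapped in its own transaction.
            status = tasklet.execute();
            calls++;
        } while (status == RepeatStatus.CONTINUABLE);
        return calls;
    }
}
```

An exception thrown by execute propagates out of the loop, which corresponds to the tasklet signalling a failure.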

To create a TaskletStep in Java, the bean passed to the tasklet method of the builder

should implement the Tasklet interface. The chunk method should not be called when

building a TaskletStep. The following example shows a simple tasklet:

@Bean

public Step step1() {

return this.stepBuilderFactory.get("step1")

.tasklet(myTasklet())

.build();

}

Controlling Step Flow

With the ability to group steps together within an owning job comes the need to be able

to control how the job "flows" from one step to another. The failure of a Step does not

necessarily mean that the Job should fail. Furthermore, there may be more than one type

of 'success' that determines which Step should be executed next. Depending upon how a

group of Steps is configured, certain steps may not even be processed at all.

Sequential Flow

The simplest flow scenario is a job where all of the steps execute sequentially, as shown in the following image:

This can be achieved by using the 'next' in a step.

The following example shows how to use the next() method in Java:

@Bean

public Job job() {

return this.jobBuilderFactory.get("job")

.start(stepA())

.next(stepB())

.next(stepC())

.build();

}

In the scenario above, 'step A' runs first because it is the first Step listed. If

'step A' completes normally, then 'step B' runs, and so on. However, if 'step A' fails,

then the entire Job fails and 'step B' does not execute.

ItemReaders and ItemWriters

All batch processing can be described in its most simple form as reading in large amounts

of data, performing some type of calculation or transformation, and writing the result

out. Spring Batch provides three key interfaces to help perform bulk reading and writing:

ItemReader, ItemProcessor, and ItemWriter.

ItemReader

Although a simple concept, an ItemReader is the means for providing data from many

different types of input. The most general examples include:

- Flat File: Flat-file item readers read lines of data from a flat file that typically describes records with fields of data defined by fixed positions in the file or delimited by some special character (such as a comma).

- XML: XML ItemReaders process XML independently of technologies used for parsing, mapping and validating objects. Input data allows for the validation of an XML file against an XSD schema.

- Database: A database resource is accessed to return resultsets which can be mapped to objects for processing. The default SQL ItemReader implementations invoke a RowMapper to return objects, keep track of the current row if restart is required, store basic statistics, and provide some transaction enhancements that are explained later.

There are many more possibilities, but we focus on the basic ones for this chapter. A

complete list of all available ItemReader implementations can be found in

Appendix A.

ItemReader is a basic interface for generic

input operations, as shown in the following interface definition:

public interface ItemReader<T> {

T read() throws Exception, UnexpectedInputException, ParseException, NonTransientResourceException;

}

The read method defines the most essential contract of the ItemReader. Calling it

returns one item or null if no more items are left. An item might represent a line in a

file, a row in a database, or an element in an XML file. It is generally expected that

these are mapped to a usable domain object (such as Trade, Foo, or others), but there

is no requirement in the contract to do so.

It is expected that implementations of the ItemReader interface are forward only.

However, if the underlying resource is transactional (such as a JMS queue) then calling

read may return the same logical item on subsequent calls in a rollback scenario. It is

also worth noting that a lack of items to process by an ItemReader does not cause an

exception to be thrown. For example, a database ItemReader that is configured with a

query that returns 0 results returns null on the first invocation of read.
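The read contract described above can be illustrated with a minimal sketch. The ItemReader interface declared here is a local stand-in that mirrors the shape of the signature shown earlier, so the example compiles without Spring Batch on the classpath. ReaderContractSketch, InMemoryReader, and countItems are hypothetical names used only for illustration.

```java
import java.util.Iterator;
import java.util.List;

class ReaderContractSketch {

    // Local stand-in with the same shape as Spring Batch's ItemReader
    // (assumption: declared here only so the sketch compiles on its own).
    interface ItemReader<T> {
        T read() throws Exception;
    }

    // Hypothetical forward-only reader over an in-memory list.
    static class InMemoryReader<T> implements ItemReader<T> {
        private final Iterator<T> iterator;

        InMemoryReader(List<T> items) {
            this.iterator = items.iterator();
        }

        @Override
        public T read() {
            // Returns the next item, or null once the input is exhausted.
            return iterator.hasNext() ? iterator.next() : null;
        }
    }

    // Drains a reader the way a caller would: keep reading until null.
    static int countItems(InMemoryReader<?> reader) {
        int count = 0;
        while (reader.read() != null) {
            count++;
        }
        return count;
    }

    public static void main(String[] args) {
        System.out.println(countItems(new InMemoryReader<>(List.of("a", "b", "c"))));
        // An empty source is not an error: the first read simply returns null.
        System.out.println(new InMemoryReader<String>(List.of()).read());
    }
}
```

Signalling the end of input with null rather than an exception keeps the calling loop simple, which is why an empty source needs no special handling.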

.2. ItemWriter

ItemWriter is similar in functionality to an ItemReader but with inverse operations.

Resources still need to be located, opened, and closed but they differ in that an

ItemWriter writes out, rather than reading in. In the case of databases or queues,

these operations may be inserts, updates, or sends. The format of the serialization of

the output is specific to each batch job.

As with ItemReader,

ItemWriter is a fairly generic interface, as shown in the following interface definition:

public interface ItemWriter<T> {
    void write(List<? extends T> items) throws Exception;
}

As with read on ItemReader, write provides the basic contract of ItemWriter. It

attempts to write out the list of items passed in as long as it is open. Because it is

generally expected that items are 'batched' together into a chunk and then output, the

interface accepts a list of items, rather than an item by itself. After writing out the

list, any flushing that may be necessary can be performed before returning from the write

method. For example, if writing to a Hibernate DAO, multiple calls to write can be made,

one for each item. The writer can then call flush on the hibernate session before

returning.
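The write-then-flush behavior described above can be sketched as follows. The ItemWriter interface is again a local stand-in mirroring the signature shown earlier, and BufferingWriter, with its buffer, flushed, and flushCount fields, is a hypothetical class in which an in-memory list plays the role of the real output. It is not how any particular Spring Batch writer is implemented.

```java
import java.util.ArrayList;
import java.util.List;

class WriterContractSketch {

    // Local stand-in mirroring the ItemWriter signature shown above.
    interface ItemWriter<T> {
        void write(List<? extends T> items) throws Exception;
    }

    // Hypothetical writer that handles each item and flushes once per chunk.
    static class BufferingWriter implements ItemWriter<String> {
        final List<String> buffer = new ArrayList<>();   // written but not yet flushed
        final List<String> flushed = new ArrayList<>();  // stands in for the real output
        int flushCount = 0;

        @Override
        public void write(List<? extends String> items) {
            for (String item : items) {
                buffer.add(item);   // one write per item in the chunk
            }
            flush();                // flush once before returning
        }

        private void flush() {
            flushed.addAll(buffer);
            buffer.clear();
            flushCount++;
        }
    }

    public static void main(String[] args) {
        BufferingWriter writer = new BufferingWriter();
        writer.write(List.of("a", "b"));   // first chunk
        writer.write(List.of("c"));        // second chunk
        System.out.println(writer.flushed + " flushed in " + writer.flushCount + " flushes");
    }
}
```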

.3. ItemStream

Both ItemReaders and ItemWriters serve their individual purposes well, but there is a

common concern among both of them that necessitates another interface. In general, as

part of the scope of a batch job, readers and writers need to be opened and closed, and

they require a mechanism for persisting state. The ItemStream interface serves that purpose,

as shown in the following example:

public interface ItemStream {
    void open(ExecutionContext executionContext) throws ItemStreamException;
    void update(ExecutionContext executionContext) throws ItemStreamException;
    void close() throws ItemStreamException;
}

Before describing each method, we should mention the ExecutionContext. Clients of an

ItemReader that also implements ItemStream should call open before any calls to

read, in order to open any resources such as files or to obtain connections. A similar

restriction applies to an ItemWriter that implements ItemStream. As mentioned in

Chapter 2, if expected data is found in the ExecutionContext, it may be used to start

the ItemReader or ItemWriter at a location other than its initial state. Conversely,

close is called to ensure that any resources allocated during open are released safely.

update is called primarily to ensure that any state currently being held is loaded into

the provided ExecutionContext. This method is called before committing, to ensure that

the current state is persisted in the database.

In the special case where the client of an ItemStream is a Step (from the Spring

Batch Core), an ExecutionContext is created for each StepExecution to allow users to

store the state of a particular execution, with the expectation that it is returned if

the same JobInstance is started again. For those familiar with Quartz, the semantics

are very similar to a Quartz JobDataMap.
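The open, update, and close lifecycle can be sketched with a reader that remembers how far it has read. Everything here is an assumption made for illustration: ExecutionContext and ItemStream are minimal local stand-ins (without the ItemStreamException of the real interface), and PositionTrackingReader and the "position" key are hypothetical names.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class RestartableReaderSketch {

    // Minimal stand-in for ExecutionContext: a keyed store for restart state.
    static class ExecutionContext {
        private final Map<String, Object> values = new HashMap<>();

        void putInt(String key, int value) {
            values.put(key, value);
        }

        int getInt(String key, int defaultValue) {
            Object value = values.get(key);
            return value == null ? defaultValue : (Integer) value;
        }
    }

    // Local stand-in for the ItemStream lifecycle, exceptions omitted.
    interface ItemStream {
        void open(ExecutionContext executionContext);
        void update(ExecutionContext executionContext);
        void close();
    }

    // Hypothetical reader that can resume from a saved position.
    static class PositionTrackingReader implements ItemStream {
        private static final String KEY = "position"; // hypothetical key name
        private final List<String> items;
        private int position;
        private boolean opened;

        PositionTrackingReader(List<String> items) {
            this.items = items;
        }

        @Override
        public void open(ExecutionContext executionContext) {
            // Restore the saved position, if any, before the first read.
            position = executionContext.getInt(KEY, 0);
            opened = true;
        }

        public String read() {
            if (!opened) {
                throw new IllegalStateException("open must be called before read");
            }
            return position < items.size() ? items.get(position++) : null;
        }

        @Override
        public void update(ExecutionContext executionContext) {
            // Copy the current state into the context so it can be persisted.
            executionContext.putInt(KEY, position);
        }

        @Override
        public void close() {
            opened = false; // release whatever open acquired
        }
    }

    public static void main(String[] args) {
        List<String> data = List.of("a", "b", "c", "d");
        ExecutionContext context = new ExecutionContext();

        PositionTrackingReader first = new PositionTrackingReader(data);
        first.open(context);
        first.read();
        first.read();
        first.update(context); // state saved at the chunk boundary
        first.close();         // simulate the execution ending here

        PositionTrackingReader restarted = new PositionTrackingReader(data);
        restarted.open(context);              // resumes from the saved position
        System.out.println(restarted.read()); // prints "c"
    }
}
```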

.4. The Delegate Pattern and Registering with the Step

Note that the CompositeItemWriter is an example of the delegation pattern, which is

common in Spring Batch. The delegates themselves might implement callback interfaces,

such as StepListener. If they do and if they are being used in conjunction with Spring

Batch Core as part of a Step in a Job, then they almost certainly need to be

registered manually with the Step. A reader, writer, or processor that is directly

wired into the Step gets registered automatically if it implements ItemStream or a

StepListener interface. However, because the delegates are not known to the Step,

they need to be injected as listeners or streams (or both if appropriate).

@Bean
public Job ioSampleJob() {
    return this.jobBuilderFactory.get("ioSampleJob")
            .start(step1())
            .build();
}

@Bean
public Step step1() {
    return this.stepBuilderFactory.get("step1")
            .<String, String>chunk(2)
            .reader(fooReader())
            .processor(fooProcessor())
            .writer(compositeItemWriter())
            // barWriter is only a delegate of the composite writer, so the Step
            // does not know about it; register it explicitly as a stream.
            .stream(barWriter())
            .build();
}

@Bean
public CustomCompositeItemWriter compositeItemWriter() {
    CustomCompositeItemWriter writer = new CustomCompositeItemWriter();
    writer.setDelegate(barWriter());
    return writer;
}

@Bean
public BarWriter barWriter() {
    return new BarWriter();
}

.5. Flat Files

One of the most common mechanisms for interchanging bulk data has always been the flat file. Unlike XML, which has an agreed-upon standard for defining how it is structured (XSD), anyone reading a flat file must understand ahead of time exactly how the file is structured. In general, all flat files fall into two types: delimited and fixed length. Delimited files are those in which fields are separated by a delimiter, such as a comma. Fixed-length files have fields that are a set length.
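The difference between the two layouts can be sketched in plain Java. This is a deliberately naive illustration that does not use Spring Batch's own tokenizers: FlatFileLayoutsSketch, splitDelimited, and splitFixedLength are hypothetical names, the sample lines are made up, and quoted delimiters are not handled.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

class FlatFileLayoutsSketch {

    // Delimited: fields are separated by a delimiter such as a comma.
    // The -1 limit keeps trailing empty fields instead of dropping them.
    static List<String> splitDelimited(String line, String delimiter) {
        return List.of(line.split(Pattern.quote(delimiter), -1));
    }

    // Fixed length: each field occupies a set number of characters.
    static List<String> splitFixedLength(String line, int... widths) {
        List<String> fields = new ArrayList<>();
        int start = 0;
        for (int width : widths) {
            // Clamp both ends so a short line yields empty fields, not an exception.
            int from = Math.min(start, line.length());
            int to = Math.min(start + width, line.length());
            fields.add(line.substring(from, to).trim());
            start += width;
        }
        return fields;
    }

    public static void main(String[] args) {
        System.out.println(splitDelimited("foo,1,true", ","));
        System.out.println(splitFixedLength("ABC   0042true ", 6, 4, 5));
    }
}
```

In both cases the reader has to know the layout in advance: the delimiter for the first form, the column widths for the second.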

.5.1. The FieldSet

When working with flat files in Spring Batch, regardless of whether it is for input or

output, one of the most important classes is the FieldSet. Many architectures and

libraries contain abstractions for helping you read in from a file, but they usually

return a String or an array of String objects. This really only gets you halfway

there. A FieldSet is Spring Batch’s abstraction for enabling the binding of fields from

a file resource. It allows developers to work with file input in much the same way as

they would work with database input. A FieldSet is conceptually similar to a JDBC

ResultSet. A FieldSet requires only one argument: a String array of tokens.

Optionally, you can also configure the names of the fields so that the fields may be

accessed either by index or name as patterned after ResultSet, as shown in the following

example:

String[] tokens = new String[]{"foo", "1", "true"};
FieldSet fs = new DefaultFieldSet(tokens);
String name = fs.readString(0);
int value = fs.readInt(1);
boolean booleanValue = fs.readBoolean(2);

There are many more options on the FieldSet interface, such as Date, long,

BigDecimal, and so on. The biggest advantage of the FieldSet is that it provides

consistent parsing of flat file input. Rather than each batch job parsing differently in

potentially unexpected ways, it can be consistent, both when handling errors caused by a

format exception, or when doing simple data conversions.
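The idea of reading fields by index or by name, with conversions and errors handled in one place, can be sketched with a small stand-alone class. SimpleFieldSet below is a hypothetical, simplified model of the concept and not Spring Batch's DefaultFieldSet; the field names name, value, and flag are made up for the example.

```java
import java.util.Arrays;
import java.util.List;

class NamedFieldsSketch {

    // Hypothetical, simplified take on the FieldSet idea.
    static class SimpleFieldSet {
        private final String[] tokens;
        private final List<String> names;

        SimpleFieldSet(String[] tokens, String[] names) {
            if (tokens.length != names.length) {
                throw new IllegalArgumentException("tokens and names must have the same length");
            }
            this.tokens = tokens.clone();
            this.names = Arrays.asList(names.clone());
        }

        String readString(int index) {
            return tokens[index].trim();
        }

        String readString(String name) {
            return readString(indexOf(name));
        }

        int readInt(String name) {
            String raw = readString(name);
            try {
                return Integer.parseInt(raw);
            } catch (NumberFormatException e) {
                // Every caller gets the same error for the same kind of bad input.
                throw new IllegalArgumentException("Field '" + name + "' is not an int: " + raw, e);
            }
        }

        boolean readBoolean(String name) {
            return "true".equals(readString(name));
        }

        private int indexOf(String name) {
            int index = names.indexOf(name);
            if (index < 0) {
                throw new IllegalArgumentException("Unknown field name: " + name);
            }
            return index;
        }
    }

    public static void main(String[] args) {
        SimpleFieldSet fs = new SimpleFieldSet(
                new String[] {"foo", "1", "true"},
                new String[] {"name", "value", "flag"});
        System.out.println(fs.readString("name") + " " + fs.readInt("value") + " " + fs.readBoolean("flag"));
    }
}
```

Because parsing lives in one class, a malformed number fails the same way in every job that reads it, which is the consistency benefit described above.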

.5.2. FlatFileItemReader

A flat file is any type of file that contains at most two-dimensional (tabular) data.

Reading flat files in the Spring Batch framework is facilitated by the class called

FlatFileItemReader, which provides basic functionality for reading and parsing flat

files. The two most important required dependencies of FlatFileItemReader are

Resource and LineMapper. The LineMapper interface is explored more in the next

sections. The resource property represents a Spring Core Resource. Documentation

explaining how to create beans of this type can be found in Spring Framework, Chapter 5. Resources. Therefore, this guide does not go into the details of

creating Resource objects beyond showing the following simple example:

Resource resource = new FileSystemResource("resources/trades.csv");

In complex batch environments, the directory structures are often managed by the Enterprise Application Integration (EAI) infrastructure, where drop zones for external interfaces are established for moving files from FTP locations to batch processing locations and vice versa. File moving utilities are beyond the scope of the Spring Batch architecture, but it is not unusual for batch job streams to include file moving utilities as steps in the job stream. The batch architecture only needs to know how to locate the files to be processed. Spring Batch begins the process of feeding the data into the pipe from this starting point. However, Spring Integration provides many of these types of services.

The other properties in FlatFileItemReader let you further specify how your data is

interpreted, as described in the following table:

| Property | Type | Description |
|---|---|---|
| comments | String[] | Specifies line prefixes that indicate comment rows. |
| encoding | String | Specifies what text encoding to use. The default is the value of |
| lineMapper | LineMapper | Converts a |
| linesToSkip | int | Number of lines to ignore at the top of the file. |
| recordSeparatorPolicy | RecordSeparatorPolicy | Used to determine where the line endings are and do things like continue over a line ending if inside a quoted string. |
| resource | Resource | The resource from which to read. |
| skippedLinesCallback | LineCallbackHandler | Interface that passes the raw line content of the lines in the file to be skipped. If |
| strict | boolean | In strict mode, the reader throws an exception on |
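How the linesToSkip, comments, and skippedLinesCallback properties interact can be modeled in a few lines of plain Java. This is an illustrative model only, not FlatFileItemReader's implementation: LineFilterSketch and selectLines are hypothetical names, a plain Consumer stands in for the callback, and edge cases of the real reader are not covered.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

class LineFilterSketch {

    // Returns the lines that would be handed on for mapping.
    static List<String> selectLines(List<String> lines,
                                    int linesToSkip,
                                    String[] comments,
                                    Consumer<String> skippedLinesCallback) {
        List<String> selected = new ArrayList<>();
        for (int i = 0; i < lines.size(); i++) {
            String line = lines.get(i);
            if (i < linesToSkip) {
                // Skipped lines at the top are passed raw to the callback.
                skippedLinesCallback.accept(line);
                continue;
            }
            if (isComment(line, comments)) {
                continue; // comment rows are dropped silently
            }
            selected.add(line);
        }
        return selected;
    }

    // A line is a comment when it starts with any configured prefix.
    static boolean isComment(String line, String[] comments) {
        for (String prefix : comments) {
            if (line.startsWith(prefix)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        List<String> skipped = new ArrayList<>();
        List<String> kept = selectLines(
                List.of("id,name", "# note", "1,foo", "2,bar"),
                1, new String[] {"#"}, skipped::add);
        System.out.println("kept=" + kept + " skipped=" + skipped);
    }
}
```

A typical use of the callback is to capture a header row, for example to carry it over into an output file.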

LineMapper

As with RowMapper, which takes a low-level construct such as ResultSet and returns